A startup with only 12 employees has secured over a billion dollars in venture funding, signaling continued investor confidence in artificial intelligence despite growing skepticism about today's AI technologies. The company, Advanced Machine Intelligence Labs (AMI Labs), was founded by Yann LeCun, who left his role as chief AI scientist at Meta late last year.

LeCun has publicly challenged the dominant paradigm of large language models (LLMs), arguing that they are not the path to meaningful, long-term AI progress. Instead, he proposes a modular architecture composed of specialized components, each trained for specific domains and roles.

A Different Architecture for AI

AMI Labs plans to remain a pure research organization for at least five years, with no immediate expectation of producing a saleable product. The team is focusing on systems built from multiple discrete modules rather than a single, general-purpose language model.

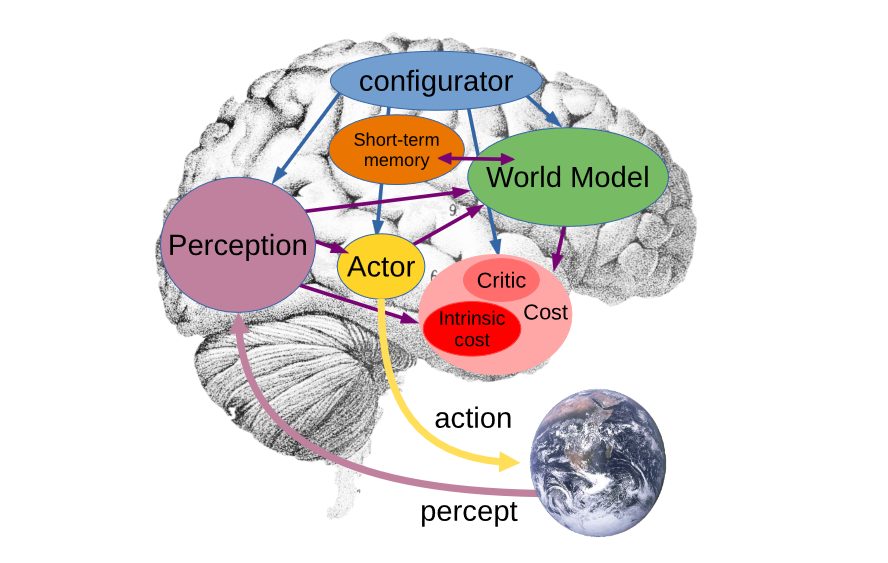

According to LeCun, his proposed system comprises six modules: a world model specific to the AI’s operating domain; an actor that proposes next steps, trained with reinforcement learning; a critic that evaluates those steps against hard-coded rules; a perception system tailored to the AI’s sensory input (video, audio, text, or images); a short-term memory; and a configurator that orchestrates the flow of information among the other modules.
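To make this division of labor more concrete, here is a toy sketch in Python of how such modules might hand information to one another during a single decision step. It is a minimal illustration under stated assumptions, not AMI Labs' code or LeCun's published design: every class name, the one-dimensional "position" world, the goal, and the forbidden-state rule are hypothetical. The actor here simply enumerates candidate moves instead of being trained with reinforcement learning.

```python
from collections import deque


class Perception:
    """Turns raw sensor input into the internal state the other modules use."""

    def encode(self, raw: dict) -> int:
        # Hypothetical sensor format: {"position": <int>}
        return int(raw["position"])


class WorldModel:
    """Domain-specific model: predicts the next state for a given action."""

    def predict(self, state: int, action: int) -> int:
        # In this toy 1-D world, an action simply shifts the position.
        return state + action


class Actor:
    """Proposes candidate actions (a stub; a real actor would be a learned policy)."""

    def propose(self, state: int) -> list:
        return [-1, 0, 1]  # move left, stay, move right


class Critic:
    """Scores predicted states against hard-coded rules and a goal."""

    def __init__(self, goal: int, forbidden: set):
        self.goal = goal
        self.forbidden = forbidden

    def score(self, predicted_state: int) -> float:
        if predicted_state in self.forbidden:
            return float("-inf")  # hard rule: never enter a forbidden state
        return -abs(self.goal - predicted_state)  # closer to the goal scores higher


class ShortTermMemory:
    """Keeps only the most recent states."""

    def __init__(self, capacity: int = 3):
        self.states = deque(maxlen=capacity)

    def remember(self, state: int) -> None:
        self.states.append(state)


class Configurator:
    """Routes information between the modules for one decision step."""

    def __init__(self, perception, world_model, actor, critic, memory):
        self.perception = perception
        self.world_model = world_model
        self.actor = actor
        self.critic = critic
        self.memory = memory

    def step(self, raw: dict) -> int:
        state = self.perception.encode(raw)
        self.memory.remember(state)
        candidates = self.actor.propose(state)
        # Imagine each candidate's outcome with the world model, let the critic
        # judge it, and return the highest-scoring action.
        return max(
            candidates,
            key=lambda action: self.critic.score(self.world_model.predict(state, action)),
        )


agent = Configurator(
    Perception(), WorldModel(), Actor(),
    Critic(goal=5, forbidden={3}), ShortTermMemory(capacity=3),
)
print(agent.step({"position": 2}))  # 0: moving right would enter forbidden state 3
print(agent.step({"position": 4}))  # 1: moving right reaches the goal
print(list(agent.memory.states))    # [2, 4]
```

The point of the design is that each module can be swapped or retrained independently: a different domain would need a new perception encoder and world model, while the configurator's routing logic stays the same.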

Directed Data, Not Internet Scrapes

Unlike LLMs trained on vast, unfiltered internet text, each instance of LeCun’s AI would receive directed data relevant only to its environment and purpose. The relative importance of each module can be tuned per application. For example, the critic would be more comprehensive in contexts involving sensitive information, while the perception module would be paramount in real-time reaction systems.

Precedents for this approach include machine-learning systems that taught themselves to play video games and board games; these relied on modular, domain-specific training rather than broad general knowledge.

Financial Implications for the AI Industry

The financial implications of a modular approach are significant. LLMs from major providers such as Anthropic, Meta, OpenAI, and Google have required far more computing resources with each iteration. Recursive prompting and reasoning models have pushed training and inference costs higher still, making it difficult for all but the largest enterprises to run these models profitably.

AMI Labs’ specialized modules could run on a fraction of the GPU power currently required for giant LLMs, or even on-device. Instead of hundreds of billions of parameters, specialist models may need only a few hundred million. This, combined with falling computing costs, suggests that local, cheap, and more accurate AI may be nearer than widely assumed.
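A rough back-of-envelope calculation shows why the parameter count matters so much for where a model can run. The sketch below estimates only the memory needed to hold a model's weights, assuming 16-bit (2-byte) weights and taking 300 billion and 300 million parameters as hypothetical stand-ins for "hundreds of billions" and "a few hundred million". It ignores activations, caches, and compute, so real requirements would be higher.

```python
def weight_memory_gb(num_params: int, bytes_per_param: int = 2) -> float:
    """Approximate memory needed just to store model weights, in GB (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


large_llm = weight_memory_gb(300_000_000_000)  # hypothetical 300B-parameter LLM
specialist = weight_memory_gb(300_000_000)     # hypothetical 300M-parameter module

print(f"{large_llm:.1f} GB")               # 600.0 GB
print(f"{specialist:.1f} GB")              # 0.6 GB
print(f"{large_llm / specialist:.0f}x")    # 1000x
```

Under these assumptions the large model's weights alone would have to be spread across several data-center GPUs, while the specialist's weights would fit comfortably in the memory of an ordinary laptop, which is what makes on-device deployment plausible.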

A Skeptical Bet on the Future

LeCun’s strategy rests partly on his belief that current large language models cannot improve enough to fulfill the aspirational claims made by their creators. AMI Labs offers investors a route to AI success at manageable cost, using an architecture that departs from the norm. Although its proposition differs from that of today’s AI behemoths, its underlying pitch of future potential is much the same.

Observers will be watching closely to see whether AMI Labs can deliver results within its five-year horizon. If it succeeds, its modular approach could reshape the AI industry’s cost structure and deployment strategies, potentially challenging the dominance of LLM-based systems.